Authors:

Leandro Morera

Abstract:

A pyramidal model of integration for NLP networks is a huge model in which the information to analyze grows at a ratio of 2exp(N) for N layers, that is, it doubles with each added layer. The model pairs the time/status duality with the main category duality cat_1/cat_2, maintaining existing information along the upper branch and incorporating new information in the lower branch. We provide an example that configures the model to process information for L2/L3 support levels in an ordered way. We train that configuration to predict the time to solution and the final status from the current status of an issue's resolution.

Background:

This invention is useful when resolving customer issues requires deeper research. The research can follow many paths to reach the same final solution. The invention can be used to predict the resolution time and final status of a customer service case based on stored experience and the current status of the issue resolution. Our proposal makes it easy to incorporate information into a huge model that grows at a ratio of 2exp(N) categories for N layers in a pyramidal interconnection structure, while maintaining the main information in the upper converging branches.

Today this problem is solved manually, using databases and the service provider's experience. The process is not automated for software in L2/L3 support. We do not have an assistant that automatically helps with issue resolution using NLP with structured data incorporation.

In the patent https://patents.google.com/patent/WO2019115200A1/, the authors propose an NLP method for the efficient assembling of natural language inference with parallel responses and weights. In our model, a pyramidal interconnection provides the interaction between the NLP cells that compose a model whose information grows by layer at a ratio of 2exp(N) for N layers.

Description:

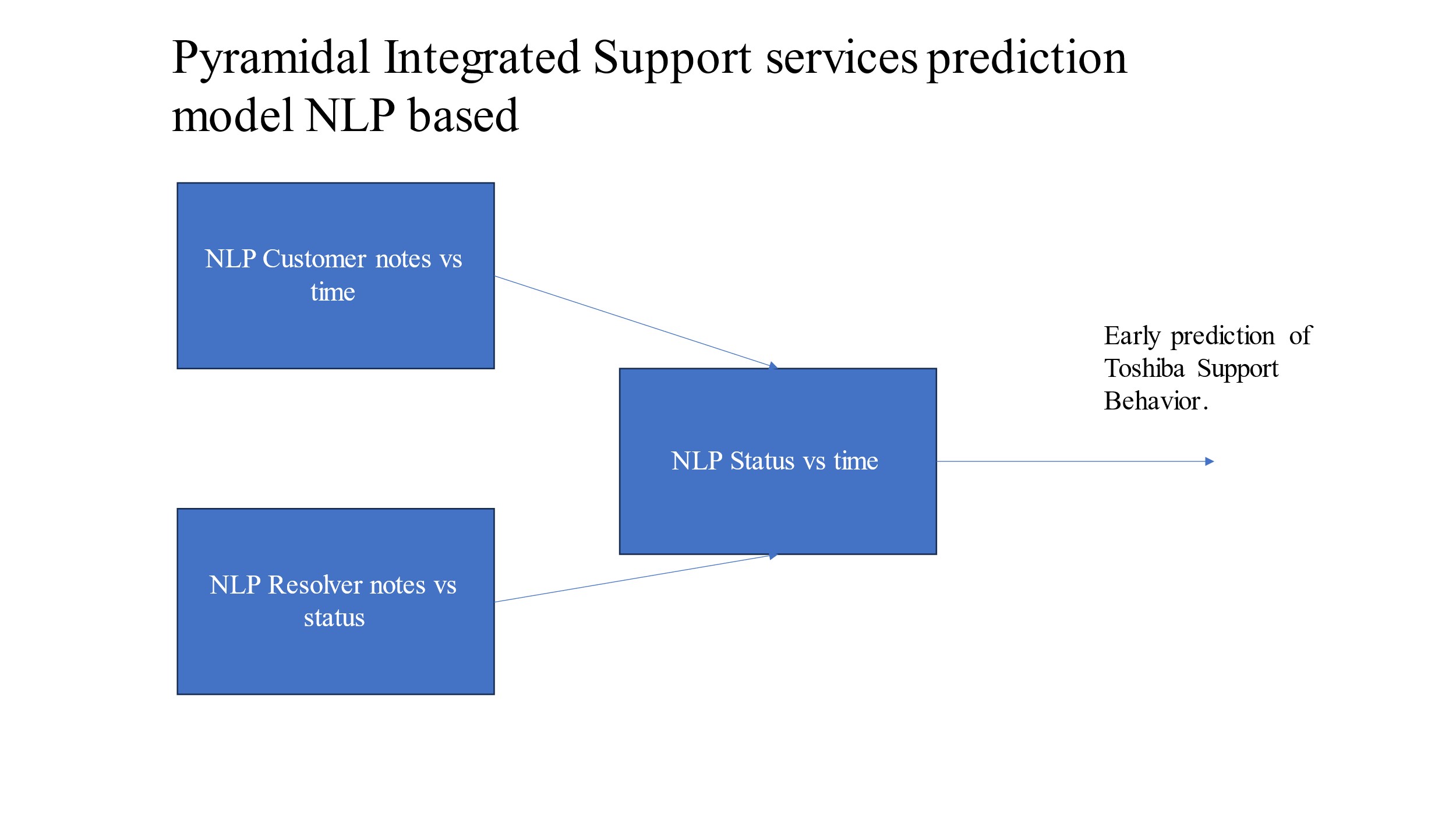

For this invention, one or more different NLP networks are selected to serve as small particles of a huge system. Each selected small network constitutes a particle and is trained in supervised mode with one-hot encoding of the data. The NLP particles are grouped in threes and connected in a pyramidal way, where the upper converging branch maintains the information along the following layers. The lower branch always incorporates a new category of information. Because the model always grows by a factor of two, we use the concepts of time and status as the element that ties each independent particle cell to the new category of information. In this way, the basic interconnection of Figure 1 contains the two main converging streams of information for the corner of the huge pyramidal model.

Fig. 1. Basic interconnection structure.
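The basic interconnection can be pictured as a small data structure. The following is a minimal sketch only, assuming that the triangle consists of two input particles converging into a third. The `Particle` class, the `basic_triangle` and `input_names` helpers, and the name `corner` are hypothetical and do not come from the disclosure; the internal NLP network of each particle is left unspecified.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Particle:
    """One NLP particle cell (hypothetical container, no network inside)."""
    name: str
    upper: Optional["Particle"] = None  # branch that maintains existing information
    lower: Optional["Particle"] = None  # branch that brings in a new category


def basic_triangle() -> Particle:
    """Assumed reading of the basic structure: two particles converge into one."""
    upper = Particle("cat_1/status")
    lower = Particle("cat_2/time")
    return Particle("corner", upper=upper, lower=lower)


def input_names(particle: Particle) -> List[str]:
    """Collect the names of the input particles, upper branch first."""
    if particle.upper is None and particle.lower is None:
        return [particle.name]
    names: List[str] = []
    for branch in (particle.upper, particle.lower):
        if branch is not None:
            names.extend(input_names(branch))
    return names


triangle = basic_triangle()
print(input_names(triangle))
```

Keeping the upper and lower branches as separate fields mirrors the distinction in the text between information that is maintained and information that is newly incorporated.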

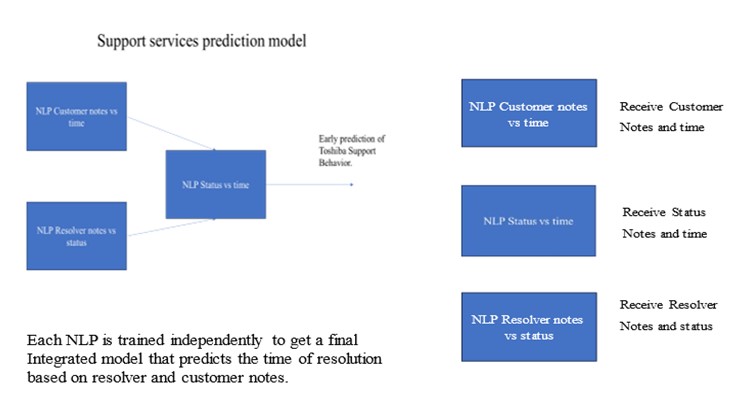

The model grows based on the pairs cat_1/status and cat_2/time. For a support service, the time/status duality is associated directly with the new data duality customer_notes/resolver_notes in the last converging layer. The information to consider is grouped by category using clustering techniques and is maintained along the upper converging branch as the model grows. The data for the first corner of the huge model is selected as indicated in Figure 2.

Fig. 2. Data selection for the NLP particle cell at the network corner of the huge model.
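As an illustration of how a status label might be prepared for supervised training of a particle, here is a minimal sketch, assuming that the encoding mentioned for particle training is standard one-hot encoding. The status vocabulary and the `one_hot` helper are hypothetical; the disclosure does not list concrete status values.

```python
from typing import List, Sequence

# Hypothetical status vocabulary, for illustration only.
STATUSES = ["open", "in_progress", "resolved"]


def one_hot(label: str, vocabulary: Sequence[str]) -> List[int]:
    """Return a vector with a single 1 at the position of `label`."""
    if label not in vocabulary:
        raise ValueError(f"unknown label: {label!r}")
    return [1 if label == entry else 0 for entry in vocabulary]


encoded = one_hot("resolved", STATUSES)
print(encoded)
```

The same helper could encode a category label or a bucketed resolution time, provided each has a fixed vocabulary.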

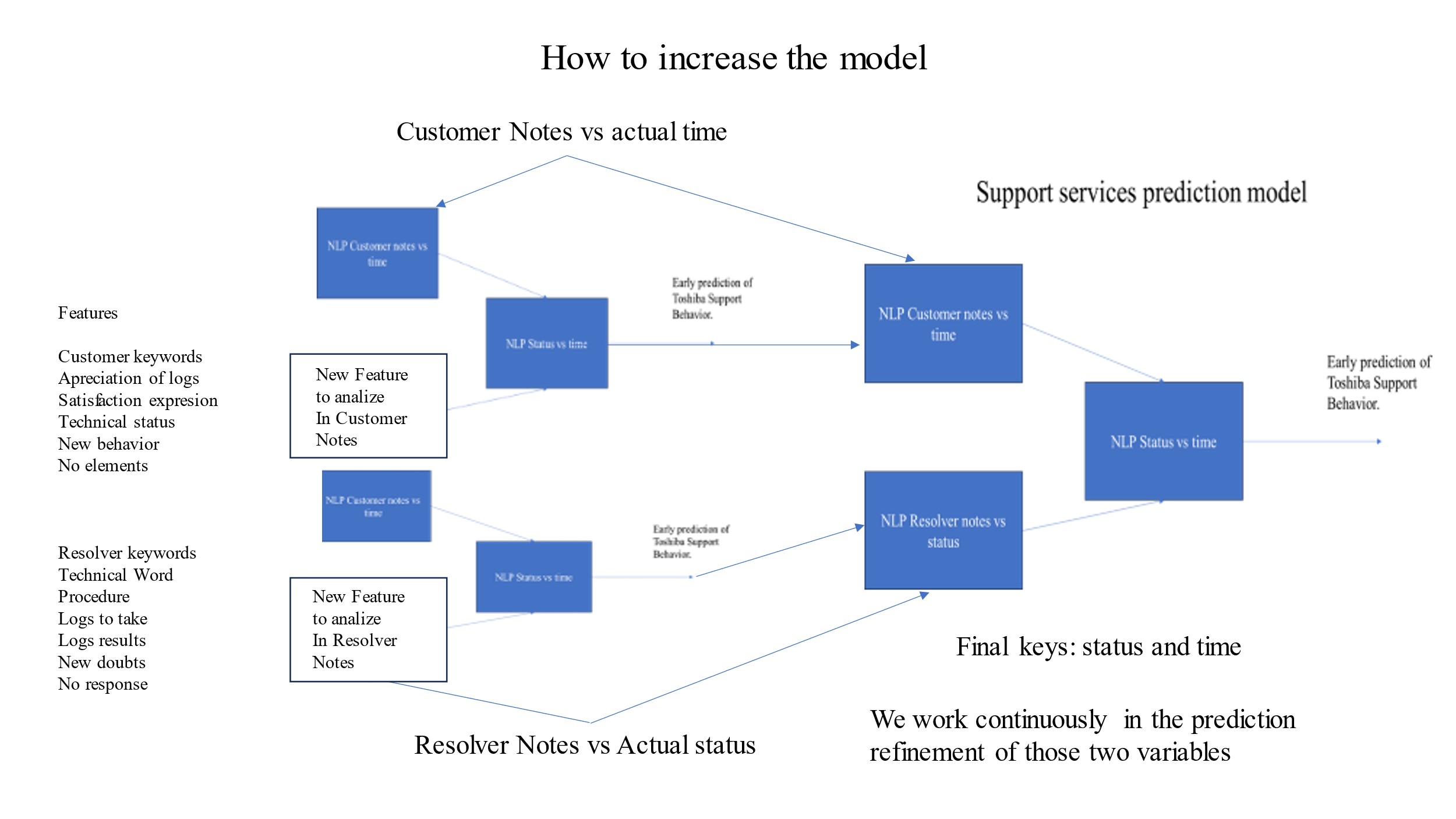

The model grows at a ratio of 2exp(N) categories for N layers in a pyramidal interconnection structure, maintaining the main information in the upper converging branches and adding the new information in the lower converging branch, as indicated in Figure 3.

The system grows by incorporating new categories of information in the layers added to the huge model. Each particle cell is trained in supervised mode, either independently or interconnected, with one-hot encoding of the information.

Fig. 3. The model grows according to the pyramidal interconnection structure, incorporating categories at a ratio of 2exp(N) for N layers. The main information is maintained in the upper converging branch and the new information is added in the lower branch.
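The growth rule can be checked with a few lines of code. This is a minimal sketch, assuming that 2exp(N) means 2 raised to the power N and that each added layer keeps every existing category (upper branch) and pairs it with one new category (lower branch). The `grow_categories` function and the generated names beyond cat_1 and cat_2 are hypothetical.

```python
from typing import List


def grow_categories(n_layers: int) -> List[str]:
    """List the categories present after `n_layers` layers of growth."""
    if n_layers < 0:
        raise ValueError("n_layers must be non-negative")
    categories = ["cat_1"]
    for _ in range(n_layers):
        first_new = len(categories) + 1
        # One new category per existing one, so the count doubles.
        new = [f"cat_{first_new + i}" for i in range(len(categories))]
        categories = categories + new
    return categories


for n in range(4):
    print(n, len(grow_categories(n)))
```

Under these assumptions the category count after N layers is 2**N, so even a modest number of layers produces a large model.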

Usages:

- This proposal is useful when resolving customer issues requires deeper research, and the research can take many forms and paths to reach the same final solution, some shorter than others. In that case it helps to have an assistant that makes predictions based on the current status.

- It is a model for understanding a group of variables and their relationships across different fields of support service information, based on natural language processing.

- We provide a way to grow the model that makes implementation, and management of the data considered, easier. The interconnected prediction model can give a new understanding of the service's interactions.

- We can obtain guidance based on experience, drawn from the history of our work in support services.

- You can evaluate the probable final time and status before submitting your response to the customer, without needing to bring additional resources, such as other people, into the research.

- We can also obtain predictions of the final status and resolution time of defects based on their current status.
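To make the prediction use case concrete, the sketch below shows a simple frequency baseline over stored experience. It is not the pyramidal NLP model: it only illustrates the input (current status) and the outputs (final status and resolution time). The `HISTORY` records, the status names, and the `predict` function are hypothetical.

```python
from collections import Counter
from statistics import mean
from typing import List, Optional, Tuple

# Hypothetical stored experience: (current_status, final_status, hours_to_resolution).
HISTORY: List[Tuple[str, str, float]] = [
    ("waiting_on_customer", "resolved", 10.0),
    ("waiting_on_customer", "resolved", 14.0),
    ("waiting_on_customer", "escalated", 30.0),
    ("in_research", "escalated", 40.0),
]


def predict(current_status: str,
            history: List[Tuple[str, str, float]]) -> Optional[Tuple[str, float]]:
    """Return the most frequent final status and its mean resolution time."""
    matches = [(final, hours) for status, final, hours in history
               if status == current_status]
    if not matches:
        return None  # no stored experience for this status
    final_status = Counter(final for final, _ in matches).most_common(1)[0][0]
    hours = mean(h for final, h in matches if final == final_status)
    return final_status, hours


print(predict("waiting_on_customer", HISTORY))
```

A trained particle network would replace the lookup in `predict`, but the interface (current status in, final status and time out) would stay the same.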

Claims:

- A pyramidal model of NLP integration with particles in triangular compositions as basic elements.

- Obtaining a huge NLP model, grown from particles, that incorporates 2exp(N) categories by layer N.

- Training of NLP particles in interconnected or unconnected configurations.

- Growth of the model based on the pairs cat_1/status and cat_2/time.

- Use of the status/time evaluation per category before integration into the pyramidal network.

- Controlled and progressive data incorporation maintaining information in converging branches.

- Each particle of the model accepts any internal NLP configuration with one-hot encoding and supervised learning.

Enabling Technology:

- Natural Language Processing (NLP)

- CRM and CMS integration for L2/L3 support levels.

- API for artificial intelligence assistance.

TGCS Reference 2991